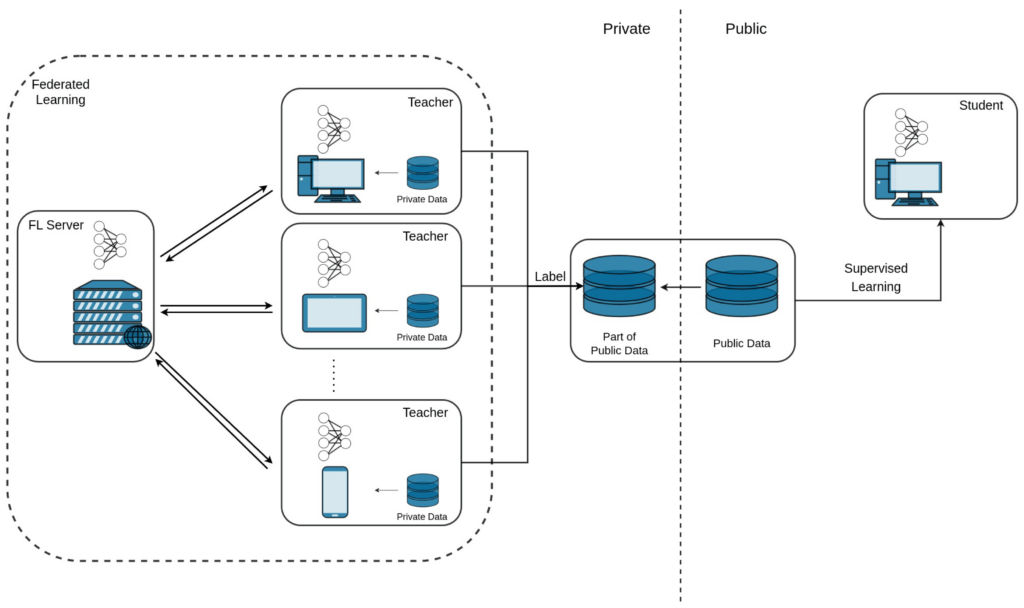

Federated Learning is identified as a reliable technique for distributed training of ML models. Specifically, a set of dispersed nodes may collaborate through a federation in producing a jointly trained ML model without disclosing their data to each other. Each node performs local model training and then shares its trained model weights with a server node, usually called Aggregator in federated learning, as it aggregates the trained weights and then sends them back to its clients for another round of local training. Despite the data protection and security that FL provides to each client, there are still well-studied attacks such as membership inference attacks that can detect potential vulnerabilities of the FL system and thus expose sensitive data. In this paper, in order to prevent this kind of attack and address private data leakage, we introduce FREDY, a differential private federated learning framework that enables knowledge transfer from private data. Particularly, our approach has a teachers–student scheme. Each teacher model is trained on sensitive, disjoint data in a federated manner, and the student model is trained on the most voted predictions of the teachers on public unlabeled data which are noisy aggregated in order to guarantee the privacy of each teacher’s sensitive data. Only the student model is publicly accessible as the teacher models contain sensitive information. We show that our proposed approach guarantees the privacy of sensitive data against model inference attacks while it combines the federated learning settings for the model training procedures.

The authors of this paper are Zacharias Anastasakis, Terpsichori-Helen Velivassaki, Artemis Voulkidis, Stavroula Bourou, Konstantinos Psychogyios, Dimitrios Skias and Theodore Zahariadis.

The whole article can be found here: https://www.mdpi.com/1999-5903/15/9/296